TensorFlow 2.0 Tutorial 05: Distributed Training across Multiple Nodes

Distributed training allows scaling up deep learning tasks so that bigger models can be learned or training can be conducted at a faster pace. In a previous ...

Published by Chuan Li

During training, the weights of a neural network are updated so that the model performs better on the training data. For a while, improvements on the training ...

Published by Chuan Li

This tutorial combines two items from previous tutorials: saving models and callbacks. Checkpoints are saved model states that occur during training. With ...

Published by Chuan Li

This tutorial shows you how to perform transfer learning using TensorFlow 2.0. We will cover:

Published by Stephen Balaban

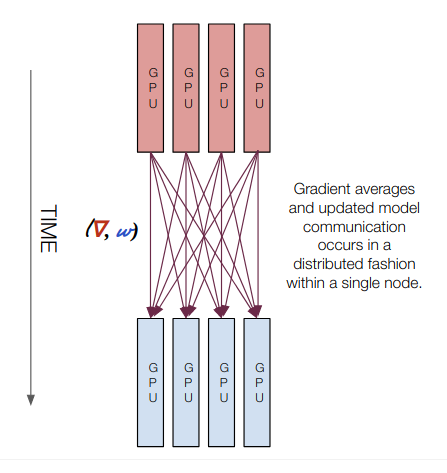

This presentation is a high-level overview of the different types of training regimes that you'll encounter as you move from single-GPU to multi-GPU to multi ...

Published by Michael Balaban

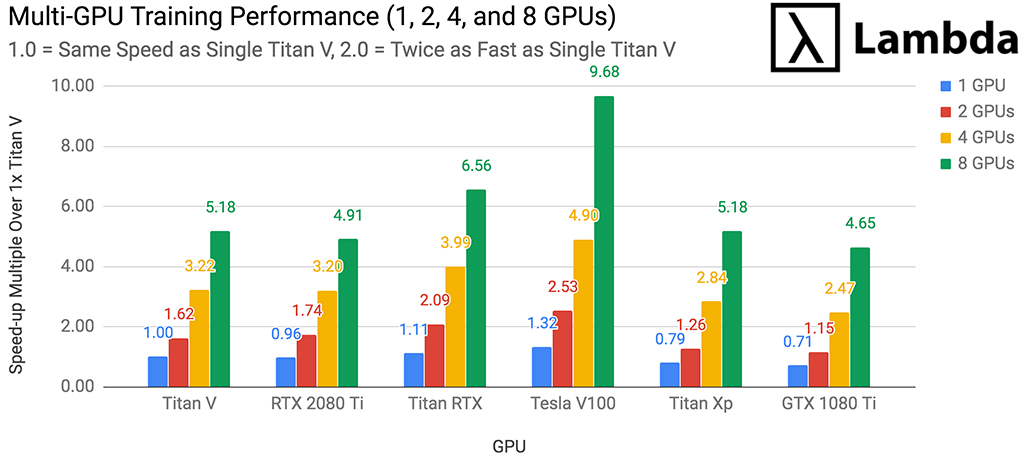

In this post, Lambda Labs benchmarks the Titan V's Deep Learning / Machine Learning performance and compares it to other commonly used GPUs. We use the Titan V ...

Published by Stephen Balaban

by Chuan Li, PhD

Published by Stephen Balaban

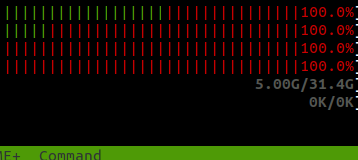

CPU, GPU, and I/O utilization monitoring using tmux, htop, iotop, and nvidia-smi. This stress test is running on a Lambda GPU Cloud 4x GPU instance. Often ...

Published by Stephen Balaban

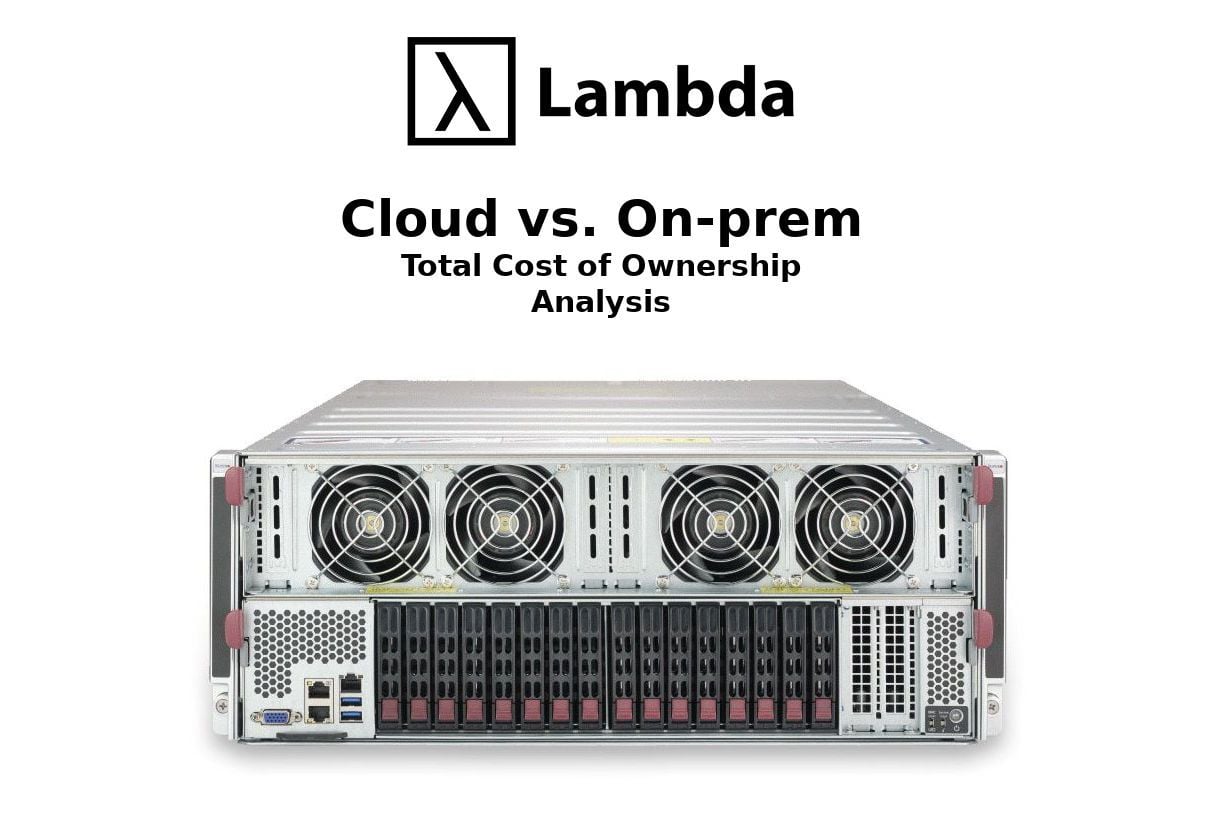

Update June 5th 2020: OpenAI has announced a successor to GPT-2 in a newly published paper. Check out our GPT-3 model overview.

Published by Chuan Li

Deep Learning requires GPUs, which are very expensive to rent in the cloud. In this post, we compare the cost of buying vs. renting a cloud GPU server. We use ...

Published by Stephen Balaban

You were probably thinking that this was going to be a long post. You're in luck. All you need to do is install Ubuntu 18.04 and then Lambda Stack. Here's ...

Published by Stephen Balaban

Or, how Lambda Stack + Lambda Stack Dockerfiles = GPU accelerated deep learning containers

Published by Chuan Li

This tutorial is about making a character-based text generator using a simple two-layer LSTM. It will walk you through the data preparation and the network ...

Published by Chuan Li

BERT is Google's method for pre-training language representations, which obtained state-of-the-art results on a wide range of Natural Language Processing tasks. ...

Published by Stephen Balaban

These slides are from my talk at Rework Deep Learning Summit 2019.