Introducing Lambda's Cloud Metrics Dashboard: Real-Time Insights for Your GPU Workloads

AI doesn’t wait and neither should real-time insights into your infrastructure!

Published on by Anket Sah

Say goodbye to storage gymnastics. Say hello to S3 simplicity.

Published on by Cory Lunn

We recently dropped a new Security page, consolidating our existing information in one spot. But we didn't stop there: we partnered with Safebase by DRATA to ...

Published on by Anket Sah

Introducing Managed Slurm (Early Preview) on Lambda: Your AI Cluster’s New Best Friend. Think of Slurm as the air‑traffic controller for your GPU fleet that ...

Published on by Anket Sah

A Fresh Take on AI Endpoints: The DeepSeek V3-0324 endpoint is here, and it’s just an API key away, with lightning-fast responses and up to 128K massive context ...

Published on by Amit Kumar

Ensuring our customers’ success is a core value at Lambda, and MLPerf Inference v5.0 is a part of our commitment to providing the best compute platform for AI ...

Published on by Thomas Bordes

Lambda: enabling the AI community & innovating since 2012. Few AI companies have a story like Lambda's. From facial recognition research supported by a ...

Published on by Sam Khosroshahi

Lambda is honored to be selected as an NVIDIA Partner Network (NPN) 2025 Americas partner of the year award winner in the category of Healthcare. This ...

Published on by Maxx Garrison

1-Click Cluster with NVIDIA HGX B200: NVIDIA Blackwell architecture is live on Lambda Cloud, with our 1-Click Clusters now featuring NVIDIA HGX B200 in addition ...

Published on by Maxx Garrison

This blog was co-authored by Inaki Madrigal, Solutions Architect, CSP, NVIDIA

Published on by Thomas Bordes

Remember that feeling each time a character in a movie, a play or a book is presented with a golden ticket - or its symbolic equivalent? Yeah, me too. Well, ...

Published on by Thomas Bordes

Lambda is excited to launch a new Security page, designed to be your go-to resource for understanding our commitment to safeguarding your data, as well as ...

Published on by Luke Miles

Guest post from Luke Miles, a San Francisco-based engineer who develops an MLP training accelerator (https://ohmchip.com/). We've invited him to share the ...

Published on by Stephen Balaban

Artificial intelligence is restructuring the global economy, redefining how humans interact with computers, and accelerating scientific progress. AI is the ...

Published on by Baseten

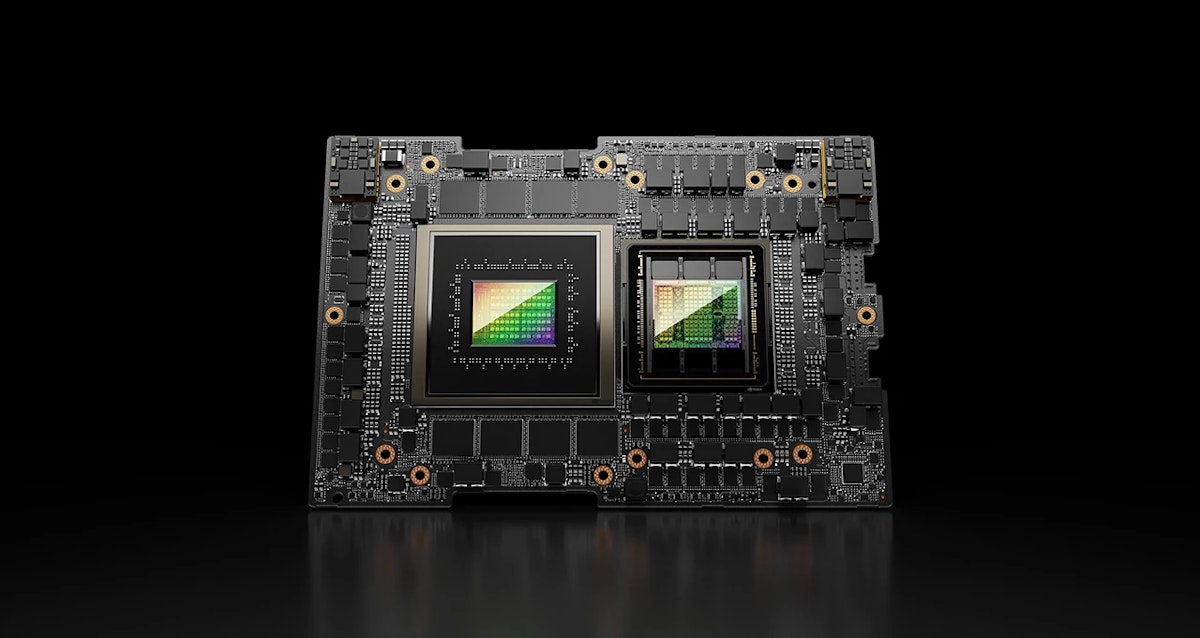

The NVIDIA GH200 Grace Hopper™ Superchip is a unique and interesting offering within NVIDIA’s datacenter hardware lineup. The NVIDIA Grace Hopper architecture ...